From Drafting Assistant to Enterprise Engine

Most teams are using AI for requirements like this:

Prompt → Output → Copy → Done.

It works — until it doesn’t.

Long conversations become bloated.

Context drifts.

Standards soften.

Governance disappears.

Rework increases.

AI doesn’t usually fail loudly.

It fails quietly — through entropy.

Over the past year, I’ve been designing what I call an AI-Enabled Requirements Operating Model — a structured approach that moves AI from “helpful drafting assistant” to a disciplined enterprise engine.

The difference isn’t the tool.

It’s the operating system around the tool.

The Core Insight

AI can either:

- Accelerate chaos, or

- Enforce clarity

It depends entirely on structure.

If you treat AI like a smart intern, you’ll get inconsistent output.

If you treat AI like an enforcement engine inside a defined operating architecture, you’ll get disciplined acceleration.

That distinction changes everything.

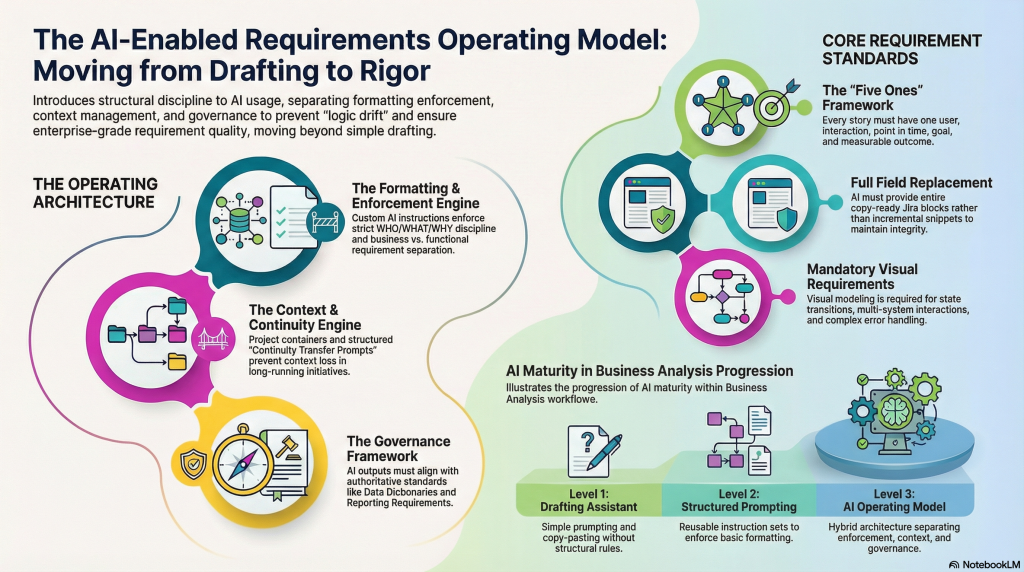

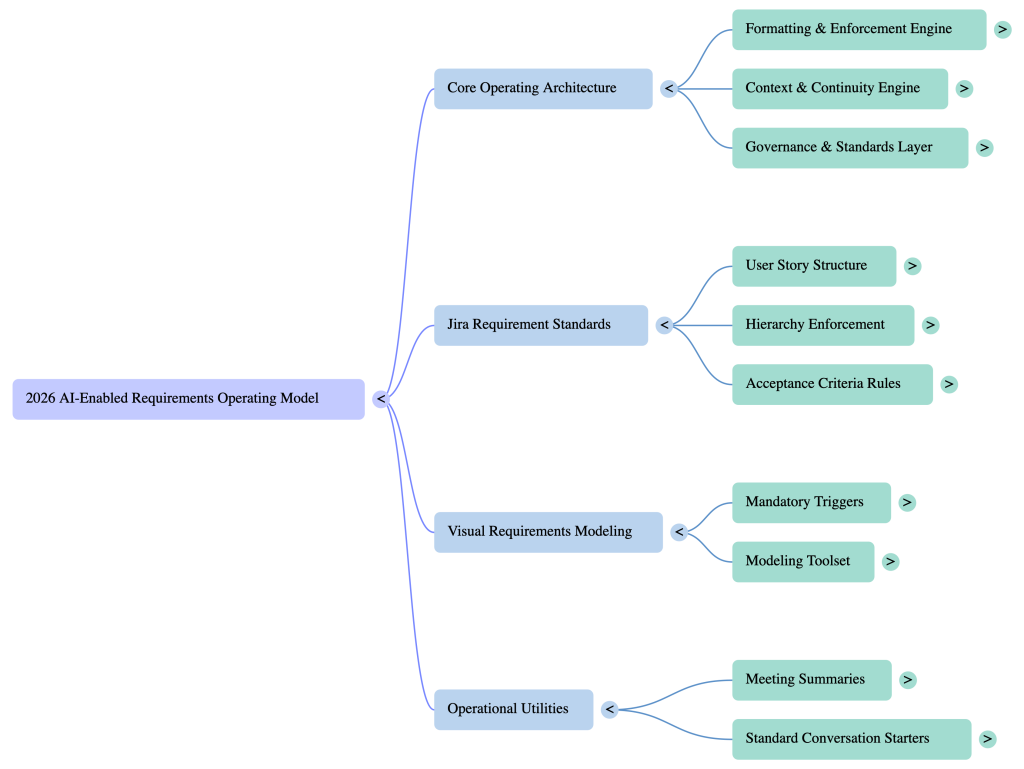

The Operating Architecture

The model separates three independent but connected layers:

1. Formatting & Enforcement Engine

2. Context & Continuity Engine

3. Governance & Standards Layer

When these are blended inside one long chat, performance and discipline degrade.

When they are separated intentionally, AI becomes reliable at scale.

1. Formatting & Enforcement Engine

This is where structured discipline lives.

The engine must enforce:

- Clear WHO / WHAT / WHY user story structure

- Separation of business vs functional intent

- Directive, testable acceptance criteria

- Hierarchy enforcement (initiative → epic → story)

- Full-field replacement edits (not incremental patchwork)

AI is extremely strong at enforcement — when rules are explicit.

If you don’t define enforcement, AI improvises structure.

Improvisation is the enemy of enterprise rigor.
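To make "explicit rules" concrete, here is a minimal sketch of an enforcement check in Python. Everything in it is an illustrative assumption rather than a prescribed standard: the `Story` fields, the "As a ..., I want ..., so that ..." pattern used for WHO / WHAT / WHY, and the short list of vague words treated as untestable.

```python
import re
from dataclasses import dataclass, field

# Hypothetical rules: the story pattern and the vague-term list are
# assumptions for illustration, not an industry standard.
STORY_PATTERN = re.compile(
    r"^As an? (?P<who>.+?), I want (?P<what>.+?),? so that (?P<why>.+?)\.?$",
    re.IGNORECASE,
)
VAGUE_TERMS = re.compile(
    r"\b(user-friendly|fast|intuitive|as needed|etc)\b", re.IGNORECASE
)


@dataclass
class Story:
    parent_epic: str  # hierarchy link: initiative -> epic -> story
    text: str
    acceptance_criteria: list[str] = field(default_factory=list)


def validate_story(story: Story) -> list[str]:
    """Return a list of rule violations; an empty list means the story passes."""
    problems = []
    if not STORY_PATTERN.match(story.text.strip()):
        problems.append("story is missing WHO / WHAT / WHY structure")
    if not story.parent_epic:
        problems.append("story is not linked to a parent epic")
    if not story.acceptance_criteria:
        problems.append("story has no acceptance criteria")
    for criterion in story.acceptance_criteria:
        if VAGUE_TERMS.search(criterion):
            problems.append(f"criterion is not testable: {criterion!r}")
    return problems
```

A check like this can run on every AI-generated story before it reaches the backlog, so a violation is caught by the rule instead of by a reviewer's memory.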

2. Context & Continuity Engine

Long-running initiatives break AI conversations.

Chats grow too long.

Performance slows.

Decision traceability disappears.

Logic fragments across sessions.

Instead of one infinite thread, context must be architected.

That means:

- Structured project containers

- Defined chat roles

- Context anchor sessions

- Explicit knowledge transfer prompts

- Controlled rotation of conversations

AI does not maintain enterprise memory automatically.

You have to design continuity deliberately.
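One way to picture a context anchor and a knowledge transfer prompt is as a small data structure that gets rendered into the opening message of the next chat. The sketch below is hypothetical: the `ContextAnchor` fields, the prompt wording, and the 40-turn rotation threshold are assumptions I chose for illustration, and the right values depend on your tool and team.

```python
from dataclasses import dataclass, field

# Hypothetical threshold: tune the rotation point to your own tool and team.
MAX_TURNS_PER_CHAT = 40


@dataclass
class ContextAnchor:
    initiative: str
    chat_role: str
    decisions: list[str] = field(default_factory=list)
    open_questions: list[str] = field(default_factory=list)

    def handoff_prompt(self) -> str:
        """Render the anchor as a knowledge transfer prompt for a fresh chat."""
        lines = [
            f"Initiative: {self.initiative}",
            f"Role of this chat: {self.chat_role}",
            "Decisions already made (do not reopen):",
        ]
        lines += [f"  {i}. {d}" for i, d in enumerate(self.decisions, start=1)]
        lines.append("Open questions:")
        lines += [f"  - {q}" for q in self.open_questions]
        return "\n".join(lines)


def should_rotate(turn_count: int, limit: int = MAX_TURNS_PER_CHAT) -> bool:
    """Signal that the conversation should be closed and handed off."""
    return turn_count >= limit
```

The point of the design is that decisions live in the anchor, not in the chat history, so rotating a conversation loses nothing that matters.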

3. Governance & Standards Layer

AI must operate inside authoritative standards — not invent them.

That includes:

- Business requirement frameworks

- Reporting structures

- Data definitions

- Integration boundaries

- Visual modeling triggers

If governance is implied instead of enforced, AI gradually drifts from compliance.

And drift creates risk.

AI should amplify your standards — not weaken them.
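As a small illustration of enforced rather than implied governance, the sketch below checks the data terms a requirement references against an authoritative glossary. The glossary contents and the `undefined_terms` helper are hypothetical; in practice the definitions would come from your organization's own data dictionary.

```python
# Hypothetical glossary: a stand-in for the organization's authoritative
# data dictionary.
APPROVED_TERMS = {
    "claim": "A request for payment under a policy.",
    "policyholder": "The person or entity that owns the policy.",
}


def undefined_terms(referenced_terms: list[str], glossary: dict[str, str]) -> list[str]:
    """Return referenced terms that have no authoritative definition."""
    known = {term.lower() for term in glossary}
    return sorted({term.lower() for term in referenced_terms} - known)
```

If a drafted requirement refers to "Customer" while the standard only defines "Policyholder", the check surfaces the drift before it spreads into downstream stories.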

The Hybrid Model

At scale, the strongest pattern is hybrid:

A structured enforcement engine for precision

Combined with

A structured context engine for long-running initiatives

Together, they form what I describe as:

An Enterprise Requirements Operating System

AI becomes a disciplined collaborator.

Not just a drafting assistant.

Maturity Levels of AI in Business Analysis

Most organizations are somewhere on this spectrum:

Level 1 – Drafting Assistant

“Write me a user story.”

Level 2 – Structured Prompting

Reusable instructions, better formatting.

Level 3 – AI Operating Model

Separated enforcement, continuity, and governance architecture.

Enterprise capability begins at Level 3.

Visual Modeling Still Matters

AI-generated text does not replace visual clarity.

When:

- Multiple systems interact

- State transitions exist

- Role-based behavior differs

- Error handling must be precise

Visual modeling reduces ambiguity before decomposition.

AI and structured visuals are complementary.

Not competitive.
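The four conditions above can be treated as explicit triggers rather than judgment calls. Here is a minimal sketch, assuming a hypothetical `RequirementProfile` that an analyst fills in before decomposition; the field names and thresholds are illustrative only.

```python
from dataclasses import dataclass


@dataclass
class RequirementProfile:
    # Hypothetical fields describing the requirement being decomposed.
    systems_involved: int = 1
    has_state_transitions: bool = False
    roles_with_distinct_behavior: int = 1
    needs_precise_error_handling: bool = False


def modeling_triggers(profile: RequirementProfile) -> list[str]:
    """Return the reasons a visual model is recommended; empty means none."""
    triggers = []
    if profile.systems_involved > 1:
        triggers.append("multiple systems interact")
    if profile.has_state_transitions:
        triggers.append("state transitions exist")
    if profile.roles_with_distinct_behavior > 1:
        triggers.append("role-based behavior differs")
    if profile.needs_precise_error_handling:
        triggers.append("error handling must be precise")
    return triggers
```

Any non-empty result is a cue to draw the diagram first and let AI decompose the stories afterward.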

Why This Matters for Leaders

The real leadership question is not:

“Should we use AI?”

It’s:

“Are we architecting AI usage responsibly?”

Without structure, AI accelerates entropy.

With structure, AI accelerates clarity.

AI does not remove the need for discipline.

It amplifies whatever discipline you build around it.

Final Thought

Most professionals think AI is about speed.

It isn’t.

It’s about structural amplification.

If you design the operating model correctly, AI becomes a force multiplier for rigor.

If you don’t, it becomes a subtle source of drift.

The difference is not the tool.

It’s the architecture.